The problem

As we know, Delta Chat, and therefore its webxdc implementation, is not really suitable for real-time communication, due to (I assume) the fact that it’s e-mail-based. You can’t send several messages per second, as you would with WebSocket or WebRTC. In fact, there is currently a throttle in deltachat-core that holds back outgoing messages, so that at a sustained rate you get just 1 batch per 10 seconds.

See, for reference

So, it’s not really possible to write apps such as video conferencing or a real-time game with spectators.

Or is it? (Vsauce music starts)

The “solution”

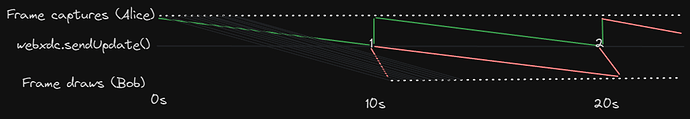

How about embrace the delay, but still ensure the stream-like-ness of the data communication? That is, if we take an example of a video stream, instead of just a one-time-per-10-seconds refresh rate we can have a much higher refresh rate (as high as you like) - by capturing several frames since the last send update, then batching them in one webxdc.sendUpdate, and then spreading the frames over time on the receiving end:
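A rough sketch of the sending side, just to make the idea concrete. `createBatcher`, its `push`/`flush` methods and the `{ packets: [...] }` payload shape are all made up for illustration; the only real API here is `webxdc.sendUpdate`, and it appears only in the usage comment. The 10-second flush interval is an assumption chosen to match the throttle mentioned above.

```javascript
// Hypothetical helper: collects timestamped packets and hands them
// to `send` as one batch. `now` is injectable so it can be tested.
function createBatcher(send, now = () => Date.now()) {
  let startedAt = null; // time of the very first packet
  let packets = [];     // packets captured since the last flush

  return {
    // Record one packet (a video frame, a keystroke, a game state...).
    push(data) {
      const t = now();
      if (startedAt === null) startedAt = t;
      // Store the offset from the first packet, not the wall-clock time.
      packets.push({ t: t - startedAt, data });
    },
    // Send everything captured so far as a single update.
    flush() {
      if (packets.length === 0) return; // nothing to send
      const batch = packets;
      packets = [];
      send({ packets: batch });
    },
  };
}

// Possible usage inside a webxdc app (not executed here):
//   const batcher = createBatcher((payload) =>
//     window.webxdc.sendUpdate({ payload }, "stream batch"));
//   setInterval(() => batcher.flush(), 10000); // assumed: one batch per 10 s
//   // ...then call batcher.push(frame) as often as you like.
```

The offsets are relative to the first packet, so the receiver doesn't need its clock to agree with the sender's.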

I know this is not a brand-new concept. It is widely used in audio/video communication software, where I think it’s simply called a “buffer” (a jitter buffer or playout buffer), and the purpose is exactly the same - accept more latency in exchange for a consistent, uninterrupted audio/video stream. I’m pretty sure there is a library somewhere made for this, as there is nothing in it specific to webxdc, or even to audio. Maybe it has even been applied in the way that I’m suggesting.

Why?

Firstly: it’s funny. Secondly, it can be useful for stream-like, not too interactive things. Possible examples:

- Actual audio/video communication (terrible) or just streaming (like lectures and stuff) (ok).

- Non-real-multiplayer real-time games (like, say, Tetris), where you can watch other players play.

- Collaborative apps, like the editor we’ve made. For the editor, it could be showing other people type in sorta-real time character by character, and not sentence by sentence.

Some implementation details

- When you send a packet, you mark the time at which you send it (it can be some kind of an offset from the first packet, or actual UNIX time).

- When you receive a batch of packets, you check the delay (it probably has to be a fixed delay, so that the spacing between the packets stays the same as on the sending side), check the timestamp of each packet, and give the packets to the consumer (the video renderer, the game state) with the said delay.
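The receiving side described in the list above could look roughly like this. Again, just a sketch: `createPlayout`, the `{ packets: [{ t, data }] }` batch shape and the fixed `delayMs` are assumptions for illustration, not an existing library. In a real app you would call `receive` from the callback you pass to `webxdc.setUpdateListener`.

```javascript
// Hypothetical playout buffer: replays packets with the same spacing
// they had on the sender, shifted by a fixed delay.
function createPlayout(consume, delayMs,
    { now = () => Date.now(), schedule = setTimeout } = {}) {
  let base = null; // local time that corresponds to sender offset 0

  return {
    // Accept one batch; returns the local due time of each packet.
    receive(batch) {
      const dueTimes = [];
      for (const p of batch.packets) {
        // Anchor the timeline on the first packet we ever see.
        if (base === null) base = now() - p.t;
        const due = base + p.t + delayMs;
        // Packets that arrive too late are played immediately.
        const wait = Math.max(0, due - now());
        schedule(() => consume(p.data), wait);
        dueTimes.push(due);
      }
      return dueTimes;
    },
  };
}

// Possible usage inside a webxdc app (not executed here):
//   const playout = createPlayout(renderFrame, 3000); // renderFrame is yours
//   window.webxdc.setUpdateListener((update) => playout.receive(update.payload));
```

Since the timeline is anchored at the moment the first batch arrives, `delayMs` only has to cover how much later than expected the following batches may show up. If it is too small, late packets get played in a burst instead of being spread out.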

Maybe just reduce the throttle period in Delta Chat?

I don’t know. I’m not sure what’s better for mail protocols and servers - one bigger message every 10 seconds, or 10 smaller ones every second.